The Compounding Software Factory 📈

The third and final episode of our software factory series!

Hey there, Luca here!

Today I am back with the third and last article in our Software Factory series, written together with my friend Rob Zuber, CTO at CircleCI.

Before this one we published:

Why three articles? Because we like to work from first principles:

In the first article we established (with actual data) that teams that are getting far with AI are doing so thanks to good DX. The same teams that had good DX three years ago are now winning the AI race: those at the 90th percentile are shipping >2x faster than before.

In the second article we said: ok, but once you have good DX, how do you create leverage with AI? And we laid out a plan in three steps:

📋 Specs — start by writing good, detailed instructions about things to be done. E.g. “create a button that has the following specs […]”

📚 Rules — turn specs into reusable rules for the AI to follow. This is when you can say to the AI: “create a button using our rules for creating buttons”.

🧩 Modules — is the final stage, when AI can reuse code directly, instead of rules, and you can simply say: “create a primary button”

This seems good enough, so why a third article?

Because if you do all of the above right, your outcome will be: a solid dev process, in which AI produces good enough work to be shipped.

Now, while this seems like an ambitious target already, I still think we should aim higher.

To understand why this matters, we need to take a step back and reflect on engineering teams before AI. Here is the agenda for today:

📉 The default path is degradation — the uncomfortable reality of engineering teams so far.

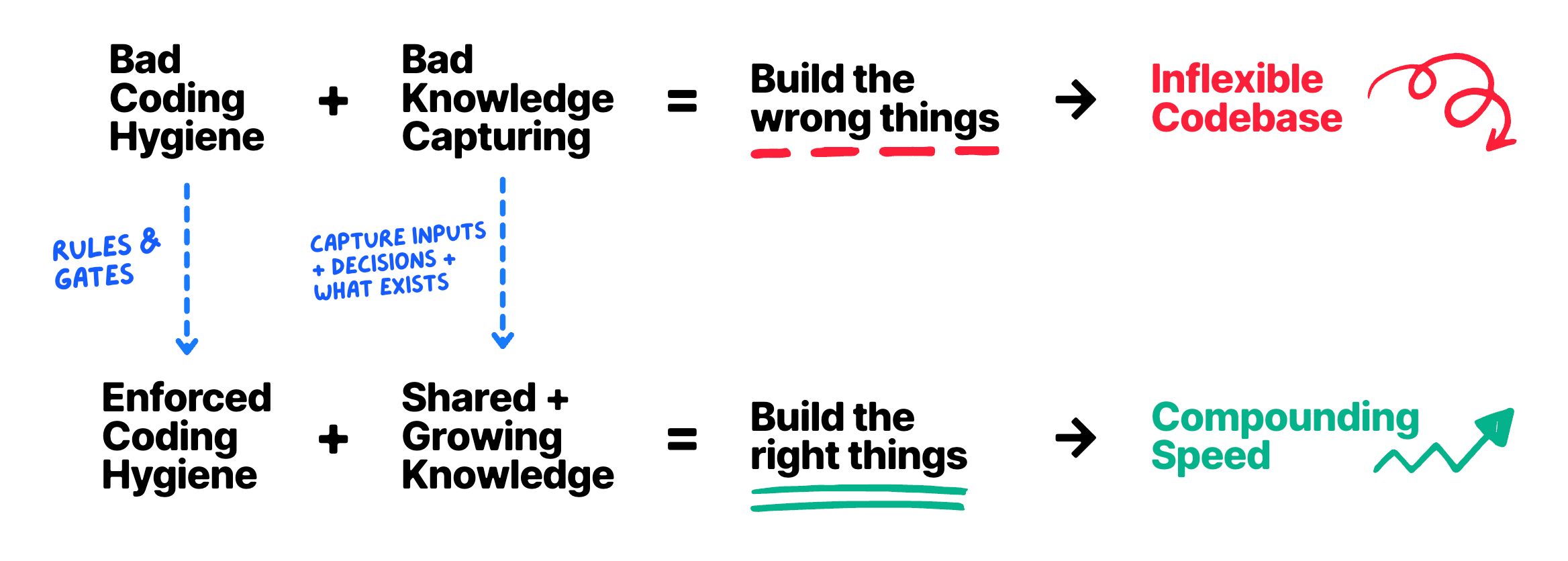

🐛 What causes teams to degrade — talking about coding hygiene, capturing knowledge, and building the wrong things.

📈 How to get better instead of worse — how to invert the trend on each of these dimensions.

🎽 Empowering managers — this is peak manager territory. Let’s talk about what managers should really do today in the age of AI.

Let’s dive in!

As always, I want to thank Rob and the great CircleCI folks for partnering on this piece. I loved bouncing ideas off them, and I am a fan of how they are trying to reinvent software validation for the AI era. You can learn more below 👇

Disclaimer: in this article I only wrote my unbiased opinion about all the practices and tools mentioned, CircleCI included.

📉 The default path is degradation

Anyone who has worked in human software teams for years — teams that work on the same codebase over a long period of time, teams that grow, shrink, rotate, and do all the things that human teams do — knows that the default trajectory is not an improving one. It’s not even staying put.

Over time, the default is getting worse.

Codebases become a mess, large teams ship less than small teams (per engineer, and often as a whole), and products get shitty.

There are exceptions of course, but this is the reality of engineering for probably 90% of teams.

I think it’s exactly this bias, plus the fear of losing control over code, that makes us happy with AI just getting things right. Good enough. If we get moderately faster through this, and don’t degrade over time, that’s a victory already!

And that’s true, but what if we don’t stop there? What if the default becomes that things get better over time, instead of worse?

What if, for any change, instead of asking “is this good enough to ship?” we asked ourselves: “does this change make the next one easier, safer, or cheaper?”

In other words, a world in which, over time:

The team gets faster — both individually and as a whole.

AI gets more accurate — because tech and product get better understood.

The codebase gets better — because of the aggregated understanding of the problem, the continuous refinement of the abstractions, and the collective time spent on making things right.

This has been discussed in recent times already, and often goes by the name of compound engineering — but it’s still unclear how to pull it off.

So today we are taking a stab at what real-world compound engineering might look like, based on the actual tools available, and our respective experience:

Rob has the privileged vantage point of overseeing the workflows of tens of thousands of teams via CircleCI. CI is the crossroads that most software activity has to pass through, so it’s perfect for spotting patterns and seeing what works and what doesn’t.

On my end, I have been building Tolaria for several months now (p.s. we got to 8K stars on GitHub!), have written 100K+ lines of code for it across more than 2,000 commits, and have developed strong opinions about the state of AI coding today.

🐛 What causes teams to degrade

I like to address problems by inversion, so to figure out how to make things get better, I like to start by reflecting on what makes them worse — and then avoid that!

We could spend weeks just debating this, but I’ll keep things simple and give you my top three candidates for what makes engineering teams (and products) degrade over time:

1) Coding hygiene

There is an obvious baseline of coding hygiene that helps keep things maintainable, like:

🔬 Good testing coverage — made of actual good tests (see Kent Beck’s desiderata)

🩺 Good code health — high cohesion, low cyclomatic complexity, small files, no bumpy roads, etc.

🧱 Good basic abstractions — that encapsulate domain language, instead of using language primitives all the time.

A key characteristic of coding hygiene is that it is largely domain agnostic. In fact, it can be easily evaluated by automatic tools that know nothing about your product.

It’s also, honestly, hard to maintain. Writing good tests is hard for a variety of reasons: they pose nasty tradeoffs and cause endless fights over time to market. Good code health is easier said than done, and even basic abstractions are often challenging.

So I empathize with teams that struggle with these — but nevertheless, these are the basics. What comes next is harder.

2) Capturing knowledge

If there is a single thing that compounds in life, it is knowledge. Knowledge is leverage, and teams are generally very bad at capturing and growing their collective knowledge.

First of all, they capture very little: no records of their decisions, no snapshots of what exists today (in whatever format), no vision/mission statements.

Also, most often no playbooks on how to do things: from designing features, to coding specific parts of the product, to operating systems in prod.

Again, I am not faulting anyone for this. Writing things down is hard, and keeping them updated is even harder.

3) Building the wrong thing

As a result of dubious knowledge + poor hygiene, we often end up building the wrong things.

And the more wrong things we build, the harder it becomes to steer a codebase, because of little residual design space (from bad abstractions), little confidence in changes (from little testing), and so on.

Again, these are all things that, by default, get worse with time. We know this from research. Large and old orgs, vs small and young ones, perform worse on all dimensions:

Code health is worse.

Institutional knowledge is less shared.

Product moves slower.

So how do we not only stop the trend, but invert it? Let’s take on these one by one 👇

🔬 Improving code hygiene

Since AI is writing the code, you can (and should) force it to write good code.

In my experience, you can do so by combining:

Rules and skills — about what the agent should do (e.g. always write tests) and how it should do it (e.g. what makes for a good test?)

Gates — agents often simply ignore rules, so you need gates that enforce these rules. Code health above X, test coverage above Y, and so on.

There are a ton of tools today that can automatically check for anything you can think of, and I am personally a fan of integrating them both in CI (as usual) and as local hooks (e.g. with Husky), to shift things left and let AI find problems earlier. Rob believes this is where validation infra needs to go: creating environments fast enough, real enough, and context-aware enough for agents to validate against before code reaches shared infrastructure.
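To make the idea of a gate concrete, here is a minimal sketch in Python. It assumes some earlier step has already collected the metrics into a JSON file; the metric names, the thresholds, and the `run_gate` helper are all hypothetical placeholders, not the output format of any specific tool.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Gate:
    """One threshold a change must satisfy before it can move forward."""
    metric: str                    # hypothetical metric name
    threshold: float
    higher_is_better: bool = True


def check_gates(metrics: dict[str, float], gates: list[Gate]) -> list[str]:
    """Return one readable failure message per violated gate."""
    failures = []
    for gate in gates:
        value = metrics.get(gate.metric)
        if value is None:
            # A missing metric fails the gate: silence should not pass.
            failures.append(f"{gate.metric}: no value reported")
            continue
        if gate.higher_is_better:
            ok, bound = value >= gate.threshold, "at least"
        else:
            ok, bound = value <= gate.threshold, "at most"
        if not ok:
            failures.append(f"{gate.metric}: got {value}, need {bound} {gate.threshold}")
    return failures


# Illustrative thresholds only: pick the X and Y that fit your codebase.
GATES = [
    Gate("test_coverage", 80.0),
    Gate("code_health", 9.0),
    Gate("max_cyclomatic_complexity", 10.0, higher_is_better=False),
]


def run_gate(metrics_path: str) -> int:
    """Read metrics from a JSON file and return a process exit code."""
    with open(metrics_path) as fh:
        problems = check_gates(json.load(fh), GATES)
    for problem in problems:
        print(f"GATE FAILED {problem}")
    return 1 if problems else 0
```

The same function could be called from a local hook and from a CI step, so the agent hits the identical check in both places. The messages say what was expected as well as what failed, which gives the agent something to act on.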

There is also hygiene work that comes from regular maintenance, after the code is in prod. This is classic KTLO, from updating dependencies, to fixing small bugs, investigating failures in logs, and more.

This is all work where AI can give you an incredible hand today. What used to be recurring grunt work for your team can turn into recurring automations that lead to PR drafts. You can create some for:

Proposing dependency updates

Scanning for security vulnerabilities, from well-known online lists

Fetching insights from Sentry and your instrumentation to auto-fix bugs before you are even aware of them

Personally, for Tolaria I have an hourly automation that:

Scans Sentry logs and GitHub issues reported by users

Investigates and tries to reproduce them

Creates tasks for the Codex/Claude queue when an issue looks worth fixing

Bug tasks (as opposed to features) are picked up automatically, and a fix is drafted for me to review.
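The control flow of an automation like this can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the actual Tolaria setup: the `Report` and `Task` types, the `fingerprint` field used for deduplication, and the `min_occurrences` cutoff are all hypothetical, and the "worth it" decision that an agent would make is replaced by a simple rule.

```python
from dataclasses import dataclass, replace


@dataclass
class Report:
    source: str          # e.g. "sentry" or "github"
    fingerprint: str     # hypothetical stable key used to spot duplicates
    title: str
    kind: str            # "bug" or "feature"
    occurrences: int = 1
    reproduced: bool = False


@dataclass
class Task:
    title: str
    kind: str
    auto_assigned: bool  # True when an agent should draft a fix right away


def dedupe(reports: list[Report]) -> list[Report]:
    """Merge reports that share a fingerprint, summing their occurrences."""
    merged: dict[str, Report] = {}
    for report in reports:
        kept = merged.get(report.fingerprint)
        if kept is None:
            merged[report.fingerprint] = replace(report)  # copy, keep input intact
        else:
            kept.occurrences += report.occurrences
            kept.reproduced = kept.reproduced or report.reproduced
    return list(merged.values())


def worth_fixing(report: Report, min_occurrences: int = 3) -> bool:
    """Stand-in for the agent's judgment: reproduced, or seen often enough."""
    return report.reproduced or report.occurrences >= min_occurrences


def triage(reports: list[Report], min_occurrences: int = 3) -> list[Task]:
    """Turn raw reports into queue tasks; only bugs are auto-assigned."""
    return [
        Task(r.title, r.kind, auto_assigned=(r.kind == "bug"))
        for r in dedupe(reports)
        if worth_fixing(r, min_occurrences)
    ]
```

Deduplicating before deciding matters here: two sources reporting the same crash should raise its priority, not create two tasks.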

Is this perfect? No. Does it create duplicates, false positives, or simply interpret things wrong? Yes! But does it help reduce my cognitive load and effort on these? Absolutely!

📚 Capturing knowledge

AI is fantastic at summarizing, expanding, moving, and overall processing content — but only if you capture it first!

And today, frankly, you should capture just about everything, exactly because of how cheap it is to process and maintain such information.

So you should capture:

Raw inputs — like meetings, brainstorming docs, things where you are diverging, not converging.

Decisions — e.g. ADRs in tech, but also product insights, directions you decided to go, and all the whys that the AI can’t figure out by itself by simply looking at the what.

What exists today — I call these recap docs, because I believe they should be built as summaries of smaller, atomic things. You can put together a big ARCHITECTURE.md doc as a combination of the ADRs, or the product version of it by combining PRDs and specs. This is 100% AI territory today: you provide human input about single features, but leave the plumbing around content orchestration to the AI.
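As a rough illustration of the recap idea, the sketch below assembles one document from a set of small ADR records. The article leaves this orchestration to the AI; this is only the deterministic skeleton, and the `Adr` fields, the "accepted" status value, and the output layout are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Adr:
    number: int
    title: str
    status: str      # assumed values: "accepted", "superseded", ...
    decision: str    # the short "why" behind the decision


def build_recap(adrs: list[Adr], heading: str = "Architecture") -> str:
    """Assemble a recap document from the ADRs that are still in force."""
    active = sorted(
        (adr for adr in adrs if adr.status == "accepted"),
        key=lambda adr: adr.number,
    )
    lines = [
        f"# {heading}",
        "",
        "Generated from ADRs. Edit the ADRs, not this file.",
        "",
    ]
    for adr in active:
        lines += [f"## {adr.title} (ADR-{adr.number:04d})", "", adr.decision.strip(), ""]
    return "\n".join(lines)
```

Because the recap is derived, the atomic records stay the source of truth: superseding one ADR and regenerating is enough to keep the big document in sync.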

All this stuff needs workflows to be created to capture data, process it, keep it in sync, and so on — but honestly, it’s all easy! We are engineers! And the leverage over time is incredible. So there is zero excuse not to do so.

🔨 Building the right thing

If you do the above right, the result will be building more right things, as opposed to wrong things:

Things that build nicely on top of what already exists

Things that are coherent with past decisions

Things that work and do not degrade your codebase

Above all, things that actually improve your codebase — because they record more decisions, say no to the things that are wrong, and yes to those that are right.

And those no’s and yeses stay there to help make better decisions in the future.

Also, such compounding can and should happen across your whole environment, not just the codebase: build history, pipeline patterns, and team context, baked in and available over time, so agents can validate more, debug better, assess whether code runs, and, most of all, whether they are building the right things.

🎽 Empowering managers

I want to close by spending a few words on managers, who are often neglected in this AI conversation.

“Managers are in trouble”, “AI is for builders”, “everyone is back to coding”, and so on.

Guys, all the stuff we discussed is peak manager work.

I have interviewed a lot of engineering managers on the podcast, and often asked them what’s the hardest part of their job. One of the common responses is: “the feedback loop is slow”.

Think about it. When you are an engineer, your feedback loop is: you write a feature, see that it works, iterate if it doesn’t, push it, possibly iterate on reviews, and it’s done. This is all extremely fast (even if it might not feel that way!).

Now compare it with the feedback loop of a manager who is trying to improve how sprint planning works. Or performance reviews. Or their team’s velocity. Any change you make to the process needs to be measured on a scale of several weeks, or months. And in many cases, what do you even measure?

Agent workflows are amazing for managers to operate because it’s like their normal work… on timelapse. Even if the agents are not that good, make mistakes, or trip over themselves, it doesn’t matter as long as they are fast and managers can course-correct quickly.

So if it is true that all engineers are becoming kinda like managers now, it’s also true that motivated managers can have the upper hand because, well, they are already managers!

And that’s it for today! I wish you a great week!

Sincerely 👋

Luca

The uncomfortable implication here is that AI doesn’t just make engineering faster. It makes organizational debt visible. A lot of teams will use agents to accelerate the same broken loop: vague specs, tribal knowledge, rushed PRs, weak validation, then another layer of abstraction nobody fully owns.

The real shift is that engineering management starts to look more like capital allocation. Every change either increases the future option value of the codebase or consumes it.

Very nice!

Would love to hear your thoughts on how managers within an organisation of a couple hundred engineers need to switch gears (direct line managers up to VP level).

How to get the middle 70% going with new AI workflows instead of only the top 15%.

How to get these new processes in place in very large and mature organisations, and what the step-by-step changes are, etc.