Last month I published The Era of the Software Factory, where I commented on the latest State of Software Delivery together with Rob Zuber, a good friend and CTO of CircleCI.

In that piece we argued that software engineering was undergoing a transformation from craftsmanship to factory work, with the gap between elite and average teams increasingly the result of good control systems and tight feedback loops, rather than individual brilliance.

Some will argue it has always been the case. Chasing 10x engineers has always felt like a dubious idea, with the healthy version being: let’s build a 10x team, instead.

Whether or not it felt true in the past, it certainly feels true now. The report showed that elite teams today — those that are getting the most out of AI — are exactly the ones who already had the best delivery infrastructure yesterday (i.e. 3 years ago). Today, these teams are both running their “factory” faster, and improving it faster. They are going faster than others, and accelerating faster, too.

So how do they do that? What does getting better look like? How do you even know it’s happening?

Let’s get practical and try to bridge this gap. Here is the agenda for today:

🧮 DORA metrics are not enough — we need leading indicators of AI success

📊 Specs, rules, and modules — a simple mental model for AI leverage in three steps

🪴 Making things compound — how to make things happen on your team

Let’s dive in!

I am grateful to Rob and the CircleCI team for partnering again on this piece. You can learn more about CircleCI below.

Disclaimer: even though CircleCI is a partner for this piece, I will only provide my unbiased opinion on all practices and tools we cover, CircleCI included.

🧮 DORA metrics and LOCs are not enough

From the report above, we know there is a small group of teams leading the pack, with outrageous numbers like 2-3x throughput, and a large group of others that, on average, are getting nothing out of AI. Literally nothing. Zero. Nil.

And not because they are not trying! AI penetration among engineers is ~90%: basically everyone is using AI every day for coding, yet the median team is not getting any faster.

How do we know this? Because as of today, in 2026, we have a good understanding of how to measure the effectiveness of the software delivery process. For example, we can look at the DORA metrics. Whether or not you use AI in the dev process, we can track how long it takes for work to go through the pipeline, how many commits are made per day, what the success rate of those commits is, and how fast you recover from mistakes.

These numbers give us a good idea of the health and effectiveness of the delivery process, and even allow us to venture into saying: “this team is doing better than this other team!”
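To make these four numbers concrete, here is a minimal Python sketch of how they could be computed from a list of deployment records. The `Deployment` record and the `delivery_metrics` function are hypothetical names used for illustration, not part of DORA or of any CircleCI API, and real data would come from your own CI/CD system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape (an assumption, not a real CI/CD schema).
    committed_at: datetime
    deployed_at: datetime
    succeeded: bool
    restored_at: Optional[datetime] = None  # set only when a failure was fixed


def hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def delivery_metrics(deployments: list[Deployment], days: int) -> dict[str, float]:
    """Summarize the four output-side numbers over a window of `days` days."""
    if not deployments or days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    failures = [d for d in deployments if not d.succeeded]
    recoveries = [
        hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        # How long work takes to go through the pipeline.
        "median_lead_time_hours": median(
            hours(d.deployed_at - d.committed_at) for d in deployments
        ),
        # How much gets shipped per day.
        "deployments_per_day": len(deployments) / days,
        # How often what gets shipped works.
        "success_rate": 1 - len(failures) / len(deployments),
        # How fast the team recovers from mistakes (0.0 if nothing was restored).
        "median_recovery_hours": median(recoveries) if recoveries else 0.0,
    }


# Usage with made-up sample data: four deployments over a two-day window.
t0 = datetime(2026, 1, 5, 9, 0)
sample = [
    Deployment(t0, t0 + timedelta(hours=2), True),
    Deployment(t0, t0 + timedelta(hours=4), True),
    Deployment(t0, t0 + timedelta(hours=6), False, restored_at=t0 + timedelta(hours=7)),
    Deployment(t0, t0 + timedelta(hours=8), True),
]
metrics = delivery_metrics(sample, days=2)
print(metrics)
```

Comparing two teams, or the same team across two periods, then comes down to running the same function on two sets of records.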

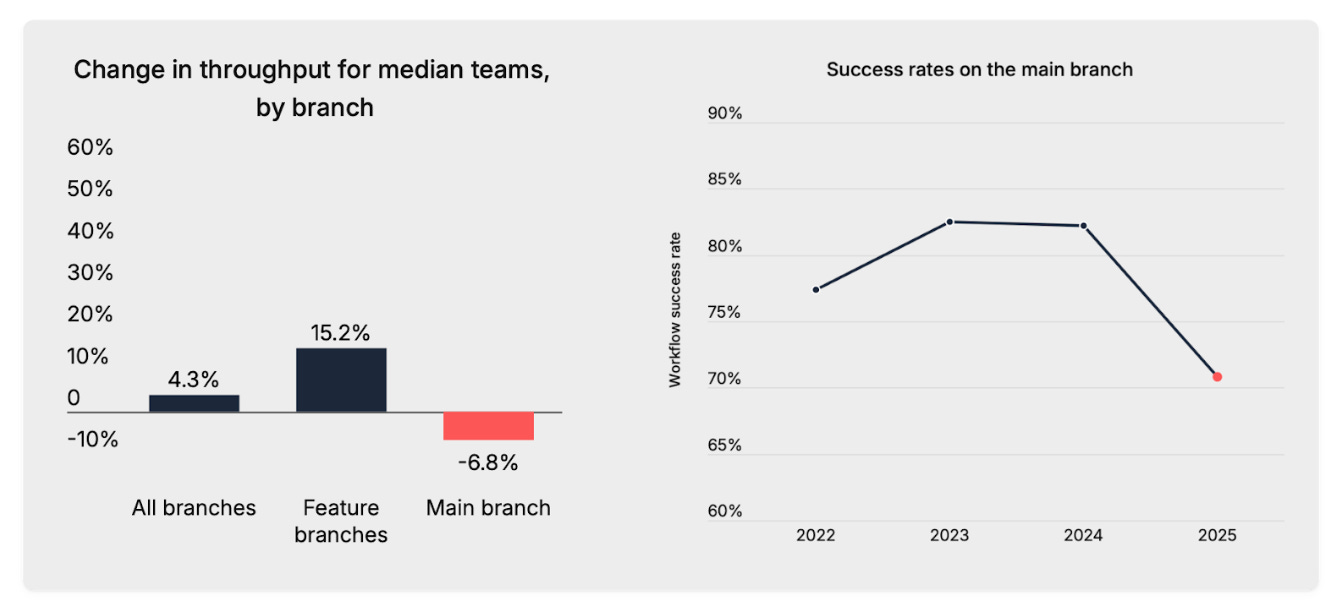

Sometimes they also allow us to say: this team is not doing any better than before — which is what CircleCI found on average in 2025:

-6.8% commits in production

-14% deployment success rate

DORA metrics are effective at measuring the impact of AI precisely because, counterintuitively, they ignore AI. They look at the output of the process, regardless of how the process is implemented.

This is both a blessing and a curse.

It’s a blessing because they stay close to where the value is, and it’s a curse because they are too high level to be directly actionable. Just like setting a goal of increasing business revenue by 10% doesn’t say much about what you need to do to get there, setting goals directly on DORA metrics isn’t very useful, and can even backfire if you are not careful. For example, it’s easy to increase the number of commits per day by splitting work into artificially small batches and shipping a few lines of code at a time, just for the sake of pedaling faster.

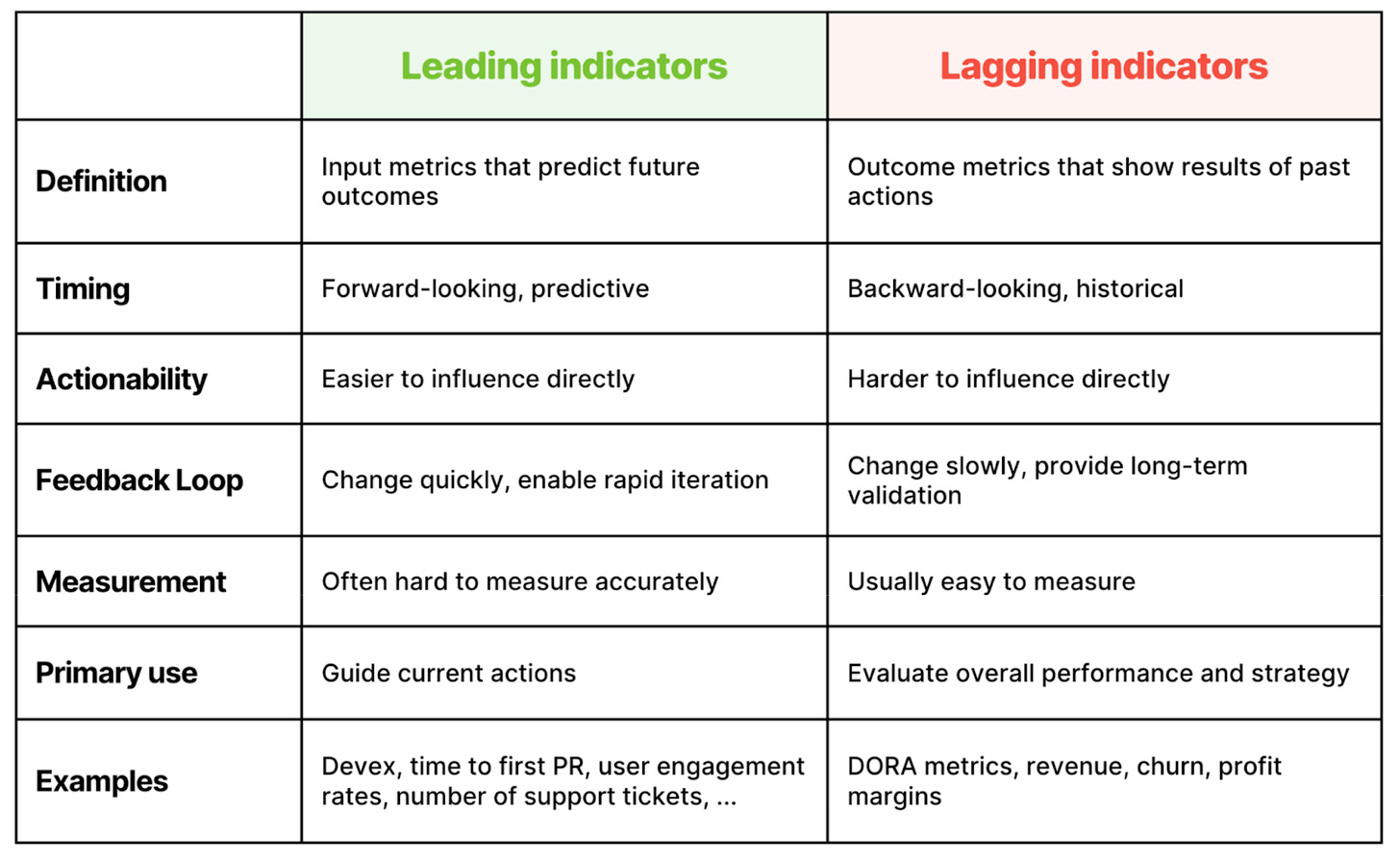

So, DORA metrics are lagging indicators. They are good for assessing the production level of your factory, but they don’t tell you how to improve your stations. For that, we need leading indicators.

The most convincing leading indicator for the SDLC is developer experience. There is plenty of research today showing that improving developer experience has outsized returns on the lagging indicators, not only those of the health of your engineering process, but those of the business as a whole. This was already the core takeaway from Accelerate, published in 2018, and it has only been confirmed since. On this topic, I particularly liked the recent Frictionless, co-written by one of the authors of Accelerate.

Developer experience, though, doesn’t say much about how we are using AI.

What are the leading indicators of AI success?