Hey, Luca here, welcome to a weekly edition of the 💡 Monday Ideas 💡 from Refactoring! To access all our articles, library, and community, subscribe to the full version.

1) 🎽 The Big Three Behavioral Questions

A couple of weeks ago I published my interview with Austen McDonald, former hiring committee chair at Meta, about behavioral interviews.

Previously we also published a guide together, where we explored 1) why behaviorals today matter more than ever, and 2) how you should prepare for them.

For the latter, there are three questions I’m very confident you will hear in some variation in any behavioral interview, so it’s worth preparing for them explicitly:

1.1) Tell me about yourself 🙋‍♂️

This is so ubiquitous it has its own acronym — TMAY (pronounced tee-may).

Your goal is to break the ice, leave a great first impression, set up context for the rest of the interview, and steer the conversation toward topics that showcase the best of your career. Here’s a structure that works:

Personal Summary — Start with a couple of sentences introducing yourself: your role, years of experience, and what you specialize in.

Accomplishments — Highlight 2–3 key achievements, ideally recent ones, that show strong business value and technical difficulty.

Forward-Looking Statement — End with a sentence connecting your past to the role you’re pursuing. Wrap it up and hand the conversation back to the interviewer.

This should take 1–2 minutes max.

1.2) Tell me about your favorite / most impactful project 🔨

This often comes right after TMAY, giving you the opportunity to double down on your impact. Whatever the exact phrasing of the question, choose the project that combines the greatest impact, the widest scope, and the largest personal contribution.

If you’re talking about a project of meaningful size, structure your response by grouping your actions around themes, then signposting those themes clearly as you speak. This makes a complex story easy to follow.

1.3) Tell me about a conflict you resolved ⚔️

For this, pick a story where the stakes were high and you were highly involved. The interviewer wants to hear about the behaviors you demonstrated — how you navigated the situation, not just what happened. And it goes without saying, unless you are really confident in your storytelling, pick a story where you ended up being right.

2) 🔨 AI should improve your code quality

Code quality is the #1 concern engineers have about AI. In fact, the dominant narrative is: AI will make you ship more code in less time, but you’ll have less control over it, and it’ll be riddled with bugs.

This can be true — but it’s not inevitable, and I don’t think it’s the best way to use AI.

What’s often overlooked is that AI is excellent at reasoning about existing code, which is at the core of reviewing and improving code. AI is fantastic at picking up code smells, security vulnerabilities, missing tests, and even team-specific issues like adherence to your own best practices and conventions.

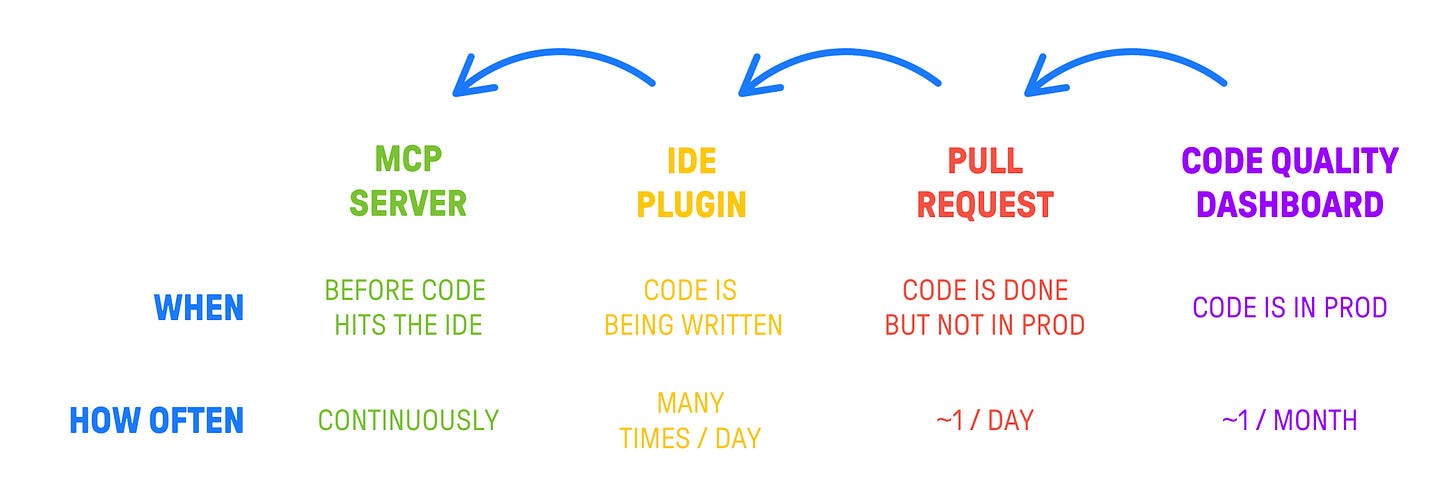

What’s also interesting is that AI is shifting code quality further left. Many static analysis checks that previously lived in CI/CD are now real-time suggestions in the IDE. Some have even become MCP servers that can steer AI-generated code before you even save the file.

So some of the best code quality tools, like CodeScene, Codacy, or Packmind, let you enforce quality standards that are possibly higher than anything you could sustain on a 100% human team.
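
To make the idea of a static check concrete, here is a minimal sketch in Python of the kind of rule that could run in CI, in an editor, or as a gate on AI-generated code. It is not how CodeScene, Codacy, or Packmind work internally. The function name `find_long_functions` and the 20-line threshold are hypothetical choices, made up for illustration.

```python
import ast


def find_long_functions(source: str, max_lines: int = 20) -> list[tuple[str, int]]:
    """Return (name, length) for every function longer than max_lines.

    Hypothetical rule for illustration only: real tools combine many
    signals, not just function length.
    """
    tree = ast.parse(source)
    findings = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # end_lineno is populated by ast.parse on Python 3.8+
            length = node.end_lineno - node.lineno + 1
            if length > max_lines:
                findings.append((node.name, length))
    return findings


if __name__ == "__main__":
    # Build a sample module with one short and one overly long function.
    sample = (
        "def tiny():\n    return 1\n\n"
        + "def big():\n"
        + "    x = 0\n" * 25
        + "    return x\n"
    )
    for name, length in find_long_functions(sample):
        print(f"{name}: {length} lines (limit 20)")
```

The same function can be called from a pre-commit hook or from an editor plugin, which is the "shift left" in practice: the rule stays the same, only the moment it runs changes.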

I also talked about this in relation to my own experiments, where I am able to keep code quality at ~9.5/10, using CodeScene.

So, if you ask me, the future of code quality is bright — but only if you’re intentional about using AI for that purpose, rather than treating it purely as a code generator.

This is just one of the ways in which what’s good for humans is also good for AI, a theme I explored in an article late last year.

3) 🎙️ Standardization vs. flexibility

One of Rob Zuber’s biggest regrets as CTO of CircleCI was the lack of early standardization — which became extremely expensive to fix later. When we spoke a couple of months ago on the podcast, he drew a sharp line between decisions that are cheap early and expensive later, and those that remain cheap throughout.

So, a few things that should be standardized are:

💻 Programming languages — avoid the “auth service written in a language no one knows” problem.

🔧 Core infrastructure patterns — database connections, queuing, observability.

📋 CI/CD processes — critical for compliance, auditability, and as the main crossroads of your dev process.

What can stay flexible:

🖥️ Developer environments — IDEs, terminal setups, and dev stuff that is up to individual preference.

📅 Some agile practices — as long as business and stakeholder needs are met!

“If you’re good at providing the things that your stakeholders need before they know they need them, you’ll have all kinds of freedom to make your own choices. If you aren’t, people will come make choices for you.” — Rob Zuber

Rob argued that excessive standardization often emerges defensively, when engineering teams fail to communicate well with their stakeholders. So, most of the time, the real solution is not more rules but better communication.

And that’s it for today! If you are finding this newsletter valuable, subscribe to the full version!

1700+ engineers and managers have joined already, and they receive our flagship weekly long-form articles about how to ship faster and work better together!

See you next week!

Luca
