About a month ago I wrote an article about my AI coding workflow, and it instantly became the most popular Refactoring article of all time.

I received a lot of private comments, most of which can be organized into two categories:

People wanting to learn more about the coding workflow itself.

People wanting to learn more about the note-taking app I am building.

Both types of comments motivated me a lot, to the point where I decided to make all of this an official part of my Refactoring work, as follows:

I am going to build this app for real, make it free and open source for everyone, and keep working on it as an ongoing effort.

I am going to report my learnings about AI coding as part of the regular writing schedule, let’s say once a month or so.

I am convinced this is the right thing to do because, frankly, getting AI coding right is the #1 problem in software engineering today, and it’s not even close.

The economics of software are changing so much that there is no part of the process that is safe. Code reviews, QA, planning, staffing, traditional management — everything feels up for grabs and downstream of what happens with coding.

Also, while I love doing research and talking with others (e.g. on the podcast), opinions are so scattered across the board that I feel a strong need to try things myself, and do so in a serious, ongoing way.

So here is today’s agenda:

💧 Building Tolaria — a personal knowledge management app for the age of AI.

🦞 OpenClaw vs Claude Code — how I use them, and to do what.

🔧 Coding workflow — what changed vs one month ago, and what stayed the same.

📑 ADRs — the best recent addition to my coding.

🎨 Product workflow — how I create specs.

💸 How much I am spending — because I know y’all want to know that.

🤖 CLAUDE.md — bonus: my current Claude file, attached in full.

Let’s dive in!

💧 Building Tolaria

In the last article I said that what I was building didn’t matter much. Well, now it kinda does, because you are going to hear a lot more about it in the future.

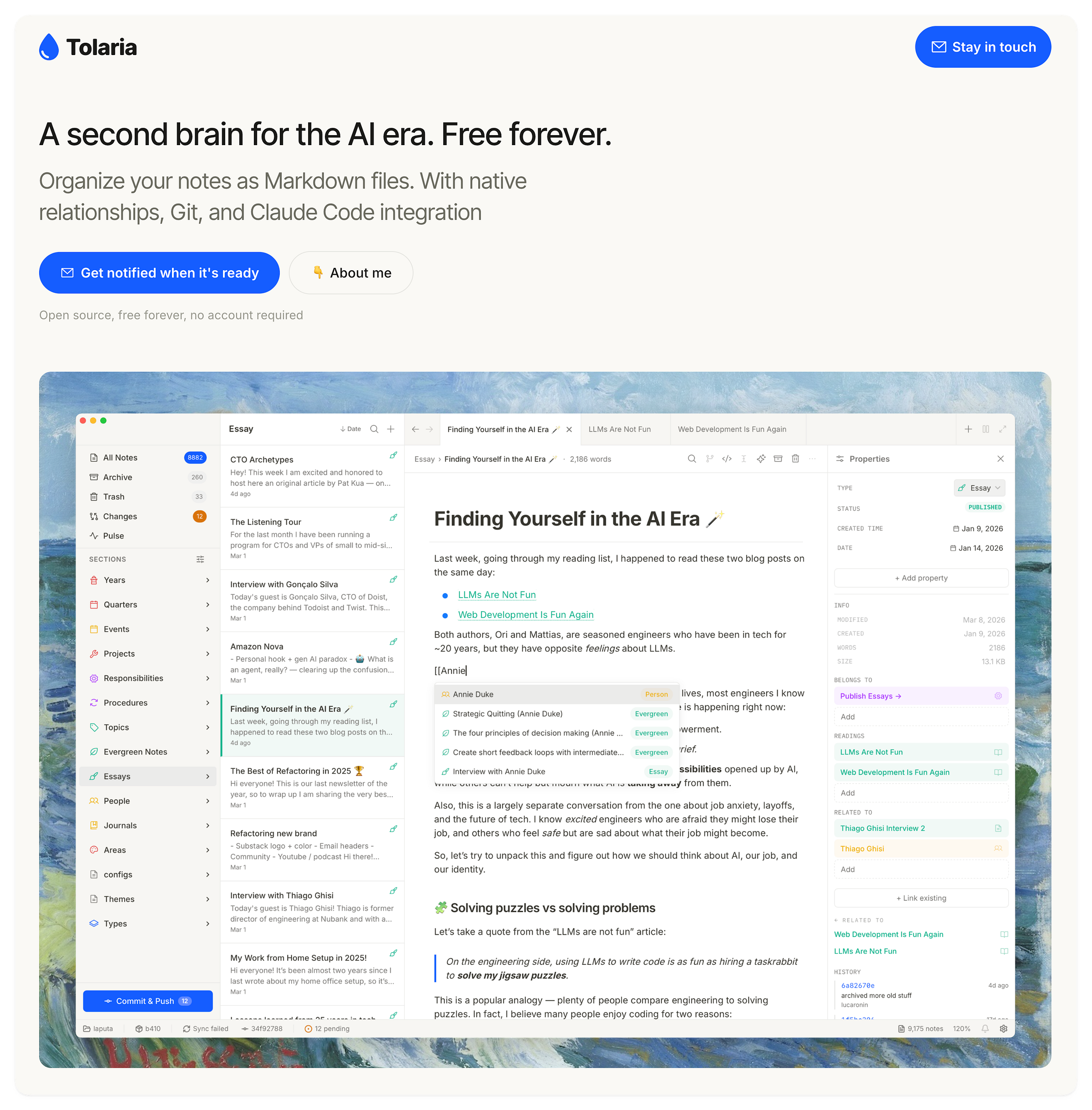

Tolaria is a personal knowledge management app, extremely opinionated and tailored for my own personal use, but I hope it can be useful to others too.

It’s worth noting that this is not a particularly original idea. There exist plenty of tools in this space — Notion, Obsidian, Craft, Roam, and more — and most of them are very well made. Still, I know plenty of smart people who lately have been experimenting with weird setups.

Just last week, Karpathy wrote a viral tweet exactly about that.

Why such renewed excitement around knowledge management? Because everyone who tries to work seriously with AI agents eventually understands that this is a core problem to solve: continuously supplying agents with reliable, up-to-date information about your life and work, and creating a good collaboration surface on top of it.

I suspect this still feels a bit abstract, so I’ll give a few examples from my own work:

An agent should periodically fetch meeting summaries from tools like Fathom or Fireflies, create a note for the meeting with the summary and action items, and link the note to individual attendees (each of whom should have a page for themselves). If there are attendees who are new, the agent should create individual notes for each of them, enriching my personal CRM.

An agent should receive voice notes from me and turn them into long-term notes and tasks, and connect them to the relevant existing pages.

An agent should take every new article I write and split it into atomic evergreen ideas, connect them to similar ideas I have already saved in the past, and better organize my knowledge base so that it’s easier to write new articles in the future.

An agent should suggest new article topics and podcast guests based on my recent readings and how these connect to things I have written in the past and interviews I already did.

In terms of intelligence required, most of these tasks are trivial. But they need a good collaboration surface 1) for agents to fetch the relevant info and 2) for me to work well with them.
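To make the first example concrete, here is a minimal sketch of the bookkeeping involved, assuming notes live in a simple in-memory dictionary keyed by title. The `Note` and `MeetingSummary` shapes and the `ingest_meeting` function are hypothetical names for illustration; they are not Tolaria's data model, and real tools like Fathom or Fireflies return their own formats.

```python
from dataclasses import dataclass, field


@dataclass
class Note:
    title: str
    body: str = ""
    links: set[str] = field(default_factory=set)  # titles of linked notes


@dataclass
class MeetingSummary:
    # Hypothetical shape, assumed for this sketch only.
    title: str
    summary: str
    action_items: list[str]
    attendees: list[str]


def ingest_meeting(vault: dict[str, Note], meeting: MeetingSummary) -> list[str]:
    """Add a meeting note to the vault and link it to each attendee.

    Returns the names of attendees who did not have a note before.
    """
    body = meeting.summary
    if meeting.action_items:
        items = "\n".join(f"- {item}" for item in meeting.action_items)
        body += "\n\nAction items:\n" + items
    meeting_note = Note(title=meeting.title, body=body)
    vault[meeting.title] = meeting_note

    new_people = []
    for name in meeting.attendees:
        if name not in vault:
            vault[name] = Note(title=name)  # new entry in the personal CRM
            new_people.append(name)
        # Link both ways so each person page lists their meetings.
        meeting_note.links.add(name)
        vault[name].links.add(meeting.title)
    return new_people
```

None of this needs much intelligence. The hard part is having a place where notes, people, and links are stored in a form that both the agent and I can read and edit.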

Tolaria is my attempt to build this for myself first, and hopefully for some others too.

It will be completely free and open source, so that it also works as a visible artifact of my understanding of how to create products with AI, for other people to inspect.

At any given time, if you want to know e.g. what I write in my CLAUDE.md, or how I write docs, or what types of tests I write and which I don’t — you shouldn’t just trust my articles, you should be able to go to the repo and see for yourself.

This way, the ideas you read on Refactoring become the result of three things:

📚 Research — I read and collect experiences from others.

💬 Conversations — I go deeper by running community events and 1:1 podcast interviews.

🔧 Personal work — I do coding and product development myself to stress-test these beliefs.

All of these elements are meant to be public: you can get weekly digests of what I read, listen to the podcast conversations, join the community to chat with me (and others), and inspect the Tolaria codebase (soon!) to see how I write code.

Tolaria is not ready yet but it will be in a couple of weeks!

So where are we today? Here is an exec summary of what I've got since I started working on it around mid-February:

1526 commits — that is ~30 commits/day on average

~70K LOCs — about 45 lines / commit

~3000 tests — about 85% coverage

9.5/10 code health — as measured by CodeScene

40+ ADRs — to track our core tech choices, plus three summary docs for Architecture, Abstractions, and Getting Started.
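The per-commit and per-day figures follow from the totals. Here is a quick sanity check using the rounded numbers from the list above; the number of working days is derived from them, not something I tracked separately.

```python
commits = 1526
lines_of_code = 70_000   # "~70K LOCs", rounded
commits_per_day = 30     # "~30 commits/day", rounded

lines_per_commit = lines_of_code / commits
implied_days = commits / commits_per_day

print(f"{lines_per_commit:.1f} lines per commit")
print(f"{implied_days:.0f} days of work implied")
```

That works out to roughly 46 lines per commit and about 51 days of work, which is consistent with a mid-February start.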

That’s 3.5x the amount of code we had last month (we were at 20K LOCs), with better code health (9.5 vs 9.3), and better docs. I am still not writing any code, and I am still reading very little of it.

The product also got way better to the point where, by now, I have fully replaced my Notion workspace. It still feels surreal to say that out loud, after 6+ years of really intensive Notion usage, but here we are.

So let’s get into how I work on it, starting with coding 👇

🦞 OpenClaw vs Claude Code

In last month’s post I said that basically I acted as CEO, OpenClaw worked as a PM, and Claude Code worked as a team of designers + engineers.

Has any of this changed? Yes, and no. Roles are still kinda the same, but OpenClaw no longer directs Claude Code step by step. The two now work asynchronously. This change has been driven by three reasons:

💸 Cost — the initial workflow in which OpenClaw steered CC all the time was wildly inefficient in terms of token spend.

🎓 Capabilities — CC has caught up with a lot of OpenClaw stuff. Most of all, it has loops now.

👮‍♂️ Legal — most recently, Anthropic has explicitly banned this type of usage, but to be fair I had stopped it already.

So by now my OpenClaw and Claude Code work on separate things, in a completely asynchronous way:

OpenClaw turns my inputs into product specs, checks on the general status of things, brainstorms new ideas, and in general does the work that benefits from broad context.

Claude Code does all the technical work, including coding, writing ADRs and docs, and, crucially, QA, which was previously done by OpenClaw.

OpenClaw doesn’t interact with Claude Code anymore, but it periodically checks that Claude Code hasn’t gotten stuck or crashed (which can happen for a variety of reasons). If it has, OpenClaw restarts it.
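The check itself is simple. Below is a minimal sketch of the decision logic, assuming the supervisor can see whether the worker process is alive and how long ago it last produced output. The function name, the `Action` enum, and the 15-minute stall timeout are all hypothetical; this is not how OpenClaw implements it internally.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    RESTART = "restart"


def check_worker(is_running: bool, seconds_since_last_output: float,
                 stall_timeout: float = 900.0) -> Action:
    """Decide whether a long-running coding agent needs a restart.

    - crashed: the process is no longer running
    - stuck: it is running but has been silent for more than `stall_timeout` seconds
    """
    if not is_running:
        return Action.RESTART
    if seconds_since_last_output > stall_timeout:
        return Action.RESTART
    return Action.NONE
```

A scheduler would call something like this every few minutes and only act on `Action.RESTART`, so the supervisor spends almost no tokens while the worker is healthy.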

🔧 Coding workflow

This article is largely meant to work as a delta vs my original AI coding article to highlight things that have changed, but it’s also worth noting the good stuff that has not changed.

The highest leverage parts of the AI coding workflow are still probably the CI gates on code health and test coverage. These gates exist in two places: