Hey, Luca here, welcome to a weekly edition of 💡 Monday Ideas 💡 from Refactoring! To access all our articles, library, and community, subscribe to the full version.

How Augment Code is going AI-native

Today’s sponsor is Augment Code!

The guys at Augment have been documenting how they are transforming their engineering team to be AI-first, in several great blog posts:

They would also love to hear from other leaders going through the same thing, and have been running a quick survey for this. You can find it below 👇

💡 Just ask engineers

As of 2026, there is a solid consensus that good developer experience is upstream of how your engineering team performs as a whole. And good devex is, in turn, mostly about removing friction from the daily work of engineers.

To remove friction you first need to know where it hides. What are the biggest blockers for your team? What is slow, hard, or simply a waste of time?

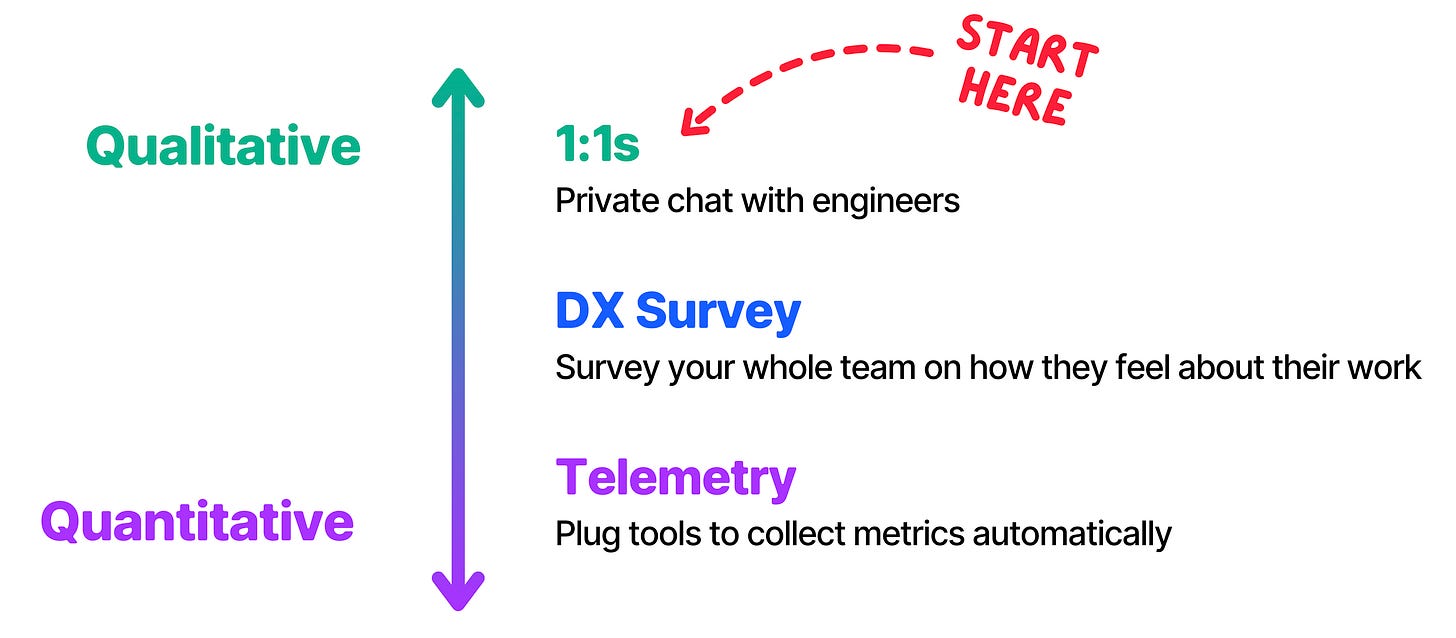

As engineers, we have a natural bias towards telemetry: quantitative data collected automatically from our processes, so we can debug them as if they were software. This approach is increasingly backed by research, from Accelerate onwards, and I have written extensively about it:

To summarize what I think about metrics: they are good, but they are not enough. Most metrics are cues that something is (or is not) working, but they don’t tell you what the problem is. They are lagging indicators: to find the actual blockers, you need to dig deeper.

How? The answer is usually deceptively simple: ask engineers. They know.

If you combine qualitative signals from engineers’ opinions with quantitative data from your systems, you’ll be unstoppable. But, counterintuitively, most managers find the former (having good conversations to identify problems) harder than the latter.

That’s why I am a fan of the so-called Listening Tour, whose name I lifted from Frictionless, but whose execution I tweaked based on my own experience. I wrote a full piece about it at the start of the year, and I still stand by it 👇

🎙️ Past behavior predicts future results

With tech chops getting a bit diluted by AI, behavioral interviews have recently come into the spotlight as a crucial way to select good engineers. Have they always been? Probably, but today even more so.

I recently talked about this on the podcast with Austin McDonald, former hiring committee chair at Meta. Austin said behavioral interviewers are essentially forecasters: they study your past performance to predict your future results. And, with people, this generally works (unlike with financial portfolios!)

Austin breaks down what interviewers look for into signal areas:

🎯 Scope & ownership — how large a project can you handle, and do you drive it forward without being asked?

🌫️ Ambiguity & perseverance — how do you navigate unclear situations and push through setbacks?

🤝 Conflict resolution, communication & leadership — how do you handle disagreements, convey ideas, and rally teams?

These signal areas map closely to most company values, but not always one-to-one. Newer AI companies like Anthropic, for instance, look for safety-oriented signals about thinking through the implications of building AI.

So Austin recommends combining general areas with company-specific research—their website, recruiter conversations, YouTube talks—to build a complete picture of what you’ll be assessed on.

“Every question they ask has this kind of thing behind it. Especially follow-up questions. People often forget — when they get a follow-up question, they just think the person wants more information. Instead, think: why are they asking me this?”

In fact, companies sit on a wide spectrum in how much weight they give to behavioral signals:

Amazon sits at one extreme with 16 rigorously enforced leadership principles.

Meta is in the middle with five structured behavioral areas.

Google has traditionally leaned more technical.

Here is the full interview with Austin:

You can also find it on 🎧 Spotify and 📬 Substack

📚 Weekly Readings

Finally, here are the best articles I have read this week:

🥇 The Git Commands I Run Before Reading Any Code

3 min • by Ally Piechowski

Great piece on the power of version control history. There is so much you can learn about a codebase (and a team) by running simple git commands. Hotspots, bus factor, bug clusters, crisis patterns: all from git history.
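To give a taste of the "hotspots" idea (the files that change most often), here is a minimal sketch of my own, not the article’s exact commands. It assumes git is installed and counts how many commits touched each file, based on the output of `git log --format=format: --name-only`. The function names `count_file_changes` and `git_hotspots` are hypothetical, and the one-year window is an arbitrary default.

```python
import subprocess
from collections import Counter


def count_file_changes(log_output: str) -> Counter:
    """Count how many commits touched each file.

    Expects the output of `git log --format=format: --name-only`:
    each commit lists the paths it changed, and commits are separated
    by blank lines, which are skipped here.
    """
    return Counter(line.strip() for line in log_output.splitlines() if line.strip())


def git_hotspots(repo_path: str = ".", since: str = "1 year ago", top: int = 10):
    """Return the `top` most frequently changed files as (path, count) pairs.

    Hypothetical helper: runs git log in `repo_path` and raises
    CalledProcessError if the path is not a git repository.
    """
    log_output = subprocess.run(
        ["git", "-C", repo_path, "log", f"--since={since}",
         "--format=format:", "--name-only"],
        capture_output=True, text=True, check=True,
    ).stdout
    return count_file_changes(log_output).most_common(top)
```

Calling `git_hotspots("path/to/repo")` inside a repository returns the most-changed files first. Frequent churn alone doesn’t mean a file is a problem, but it tells you where to start reading.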

🥈 Feedback Flywheel

10 min • by Rahul Garg

I really liked this article. It’s very practical and proposes some novel angles on AI feedback. Every AI interaction generates signal: prompts that worked, context that was missing, patterns that failed. Rahul proposes a structured practice that takes learnings from AI sessions and feeds them back into the team’s shared artifacts.

🥉 The Human Weight of It

7 min • by Cate Huston

Can Claude replace coaching? Cate has been experimenting: using AI for structured issues, but keeping her human coach for the messier stuff. AI is great when you need structure and validation. But when you need to feel seen, you need the human weight of someone else’s confidence in you. I loved it.

And that’s it for today! If you are finding this newsletter valuable, subscribe to the full version!

1700+ engineers and managers have joined already, and they receive our flagship weekly long-form articles about how to ship faster and work better together! Learn more about the benefits of the paid plan here.

See you next week!

Luca